In 2026, businesses with clean, trusted, and reliable data will lead the way. As data volumes keep growing, businesses need a tool that automates data pipelines while keeping compliance and governance under control. If you are looking to buy a data quality tool for your business, this guide will help you make a better decision and answer your questions.

What comprises high data quality?

Data quality measures the extent to which a data set meets established benchmarks for accuracy, consistency, reliability, completeness, and timeliness. A high data quality score indicates that the information is suitable for analysis, reporting, and decision-making.
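
As a rough illustration of how two of these dimensions could be turned into a score, here is a minimal Python sketch. The function names, the sample fields (`id`, `email`, `updated_at`), and the unweighted average are all assumptions made for the example, not a standard formula or any vendor's method.

```python
from datetime import datetime, timedelta


def completeness(rows, required_fields):
    """Share of required cells that are present and non-empty (0.0 to 1.0)."""
    total = len(rows) * len(required_fields)
    if total == 0:
        return 1.0
    filled = sum(
        1
        for row in rows
        for field in required_fields
        if row.get(field) not in (None, "")
    )
    return filled / total


def timeliness(rows, timestamp_field, max_age, now):
    """Share of rows whose timestamp is no older than max_age relative to now."""
    if not rows:
        return 1.0
    fresh = sum(1 for row in rows if now - row[timestamp_field] <= max_age)
    return fresh / len(rows)


def quality_score(rows, required_fields, timestamp_field, max_age, now):
    """Unweighted mean of the two dimension scores (the weighting is an assumption)."""
    return (
        completeness(rows, required_fields)
        + timeliness(rows, timestamp_field, max_age, now)
    ) / 2


# Hypothetical sample data for illustration only.
now = datetime(2026, 1, 10)
rows = [
    {"id": 1, "email": "a@example.com", "updated_at": datetime(2026, 1, 9)},
    {"id": 2, "email": "", "updated_at": datetime(2026, 1, 1)},
]
print(quality_score(rows, ["id", "email"], "updated_at", timedelta(days=2), now))
```

In practice, each business would choose its own dimensions, thresholds, and weights; the point is only that a "score" is a measurable ratio rather than a subjective label.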

Challenges

Even the best data quality tools can fall short if they aren’t implemented with the right governance, integration, and accountability structures.

Here are the most common challenges teams face when managing data quality programs:

• Integration and scalability issues

• Operational noise and maintenance overhead

• Ownership, accountability, and governance gaps

Best practices for building a data quality framework

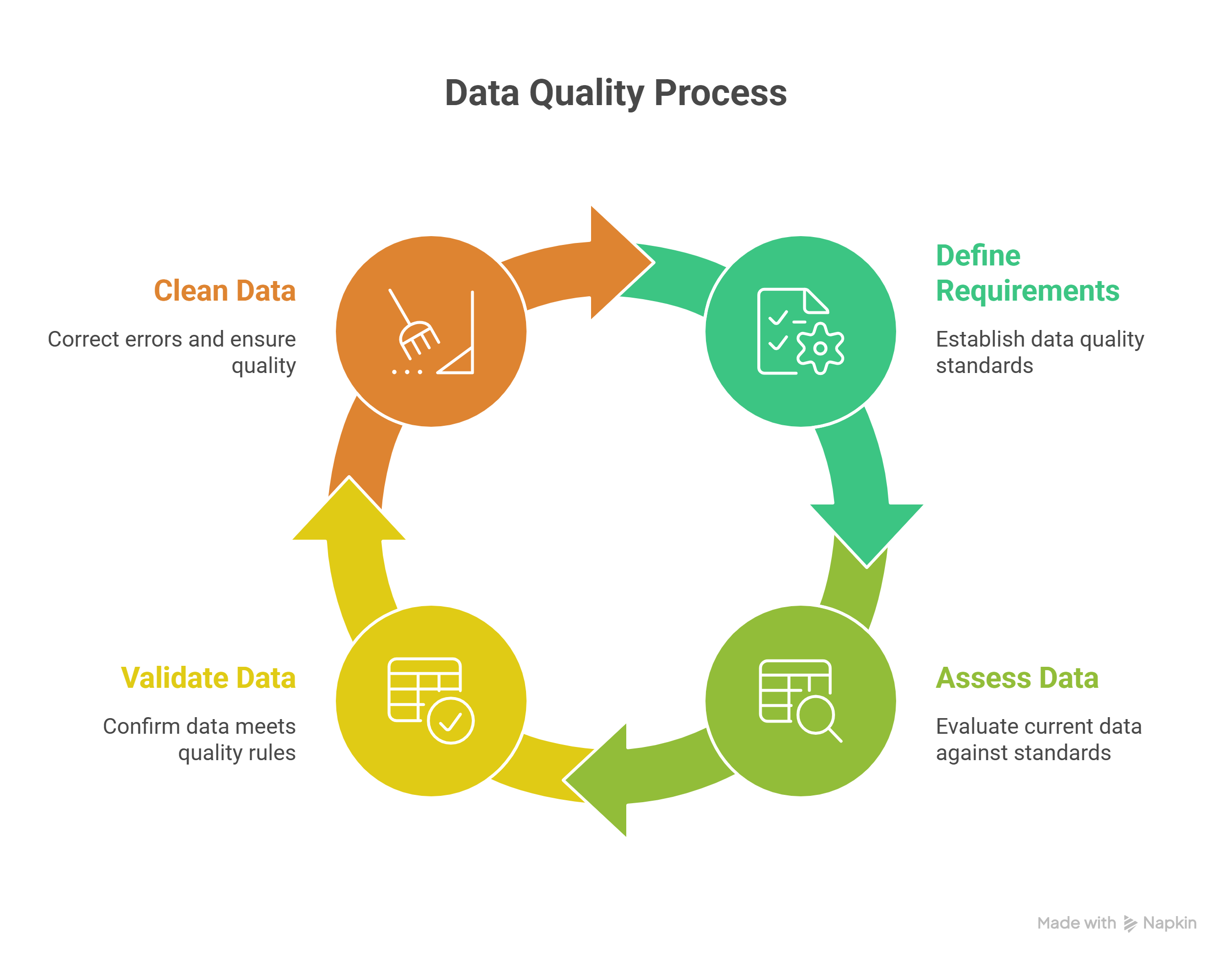

The data quality process uses various strategies to ensure accurate, reliable, and valuable data throughout the data lifecycle.

1. Requirements: Define the necessary data quality standards.

2. Assessment & Analysis: Evaluate current data against the defined requirements.

3. Validation: Confirm that data meets quality rules.

4. Cleaning & Assurance: Correct data errors and ensure ongoing quality.
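
The four steps above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `email` and `age` fields, the rule thresholds, and the helper names (`RULES`, `validate`, `assess`, `clean`) are hypothetical, and a real framework would cover far more rules and edge cases.

```python
import re

# Step 1 (Requirements): each rule maps a field name to a pass/fail check.
# The pattern and limits below are illustrative assumptions.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

RULES = {
    "email": lambda v: isinstance(v, str) and EMAIL_PATTERN.match(v) is not None,
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
}


def validate(record, rules=RULES):
    """Step 3 (Validation): return the names of fields that break their rule."""
    return [field for field, check in rules.items() if not check(record.get(field))]


def assess(records, rules=RULES):
    """Step 2 (Assessment): count rule failures per field across the data set."""
    failures = {field: 0 for field in rules}
    for record in records:
        for field in validate(record, rules):
            failures[field] += 1
    return failures


def clean(record):
    """Step 4 (Cleaning): apply a safe, mechanical fix (trim and lowercase email)."""
    fixed = dict(record)
    if isinstance(fixed.get("email"), str):
        fixed["email"] = fixed["email"].strip().lower()
    return fixed


# Hypothetical sample records for illustration only.
records = [
    {"email": " Ana@Example.com ", "age": 34},
    {"email": "not-an-email", "age": 150},
]
print(assess(records))                      # failures before cleaning
print(assess([clean(r) for r in records]))  # failures after cleaning
```

Cleaning fixes only what can be repaired mechanically. The second record still fails both rules after cleaning, which is the kind of case that typically needs review by a data owner.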

What are data quality tools?

Data quality tools are software solutions that help teams maintain accurate, complete, and consistent data across systems and databases.

In simple terms, they act like automated quality control for your data pipelines, continuously checking whether the data flowing into your dashboards, analytics, and AI models is clean, reliable, and ready to use.

According to Gartner, these tools “identify, understand, and correct flaws in data” to improve accuracy and decision-making. For fast-growing SaaS companies, this means fewer data silos, reduced compliance risks, and more trustworthy business insights.

What do data quality tools do?

Data quality management tools help organizations keep their data accurate, consistent, and reliable across systems.

They do this by automatically checking, analyzing, correcting, and monitoring data before problems spread to reports, operations, or AI systems.

At a high level, these tools:

- Validate data to ensure it follows business rules and correct formats.

- Profile data to detect patterns, anomalies, and hidden issues.

- Standardize data so formats and naming are consistent across platforms.

- Detect duplicates and match records to avoid multiple versions of the same entity.

- Monitor data continuously to identify issues like missing data, schema changes, or delays.
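
To make two of these capabilities concrete, here is a small Python sketch of column profiling and schema-change monitoring. It is a toy illustration, not how any specific product works; the function names and the `{column: type_name}` schema representation are assumptions chosen for the example.

```python
from collections import Counter


def profile_column(rows, column):
    """Basic profile of one column: row count, nulls, distinct values, top value."""
    values = [row.get(column) for row in rows]
    present = [v for v in values if v is not None]
    return {
        "count": len(values),
        "nulls": len(values) - len(present),
        "distinct": len(set(present)),
        "top": Counter(present).most_common(1),
    }


def schema_changes(expected, observed):
    """Compare two {column: type_name} mappings and report the differences."""
    shared = set(expected) & set(observed)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }


# Hypothetical sample data for illustration only.
rows = [{"country": "US"}, {"country": "US"}, {"country": None}, {"country": "DE"}]
print(profile_column(rows, "country"))

expected = {"id": "int", "amount": "float", "note": "str"}
observed = {"id": "int", "amount": "str", "currency": "str"}
print(schema_changes(expected, observed))
```

A monitoring job could run checks like these on a schedule and raise an alert when the null count jumps or when a column is added, removed, or changes type.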

Top data quality tools for 2026

1. Informatica Data Quality

Informatica Data Quality and Observability is software designed to help organizations assess, monitor, and manage the quality of their data across various sources and environments. It provides capabilities for data profiling, cleansing, standardization, matching, and validation to ensure data accuracy and consistency.

Best for: Large organizations that require robust, customizable data profiling and compliance-grade data governance.

2. Ataccama ONE

Ataccama ONE is a software platform focused on data management and governance. It enables organizations to automate data quality monitoring, data profiling, mastering, and cataloging across multiple systems. The software provides features for data integration, metadata management, and policy enforcement while supporting both structured and unstructured data sources.

3. DQLabs Platform

DQLabs is an automated, modern data quality platform that delivers reliable and accurate data for better business outcomes. DQLabs automates business quality checks and resolution using a semantic layer to deliver “fit-for-purpose” data for consumption across reporting and analytics.

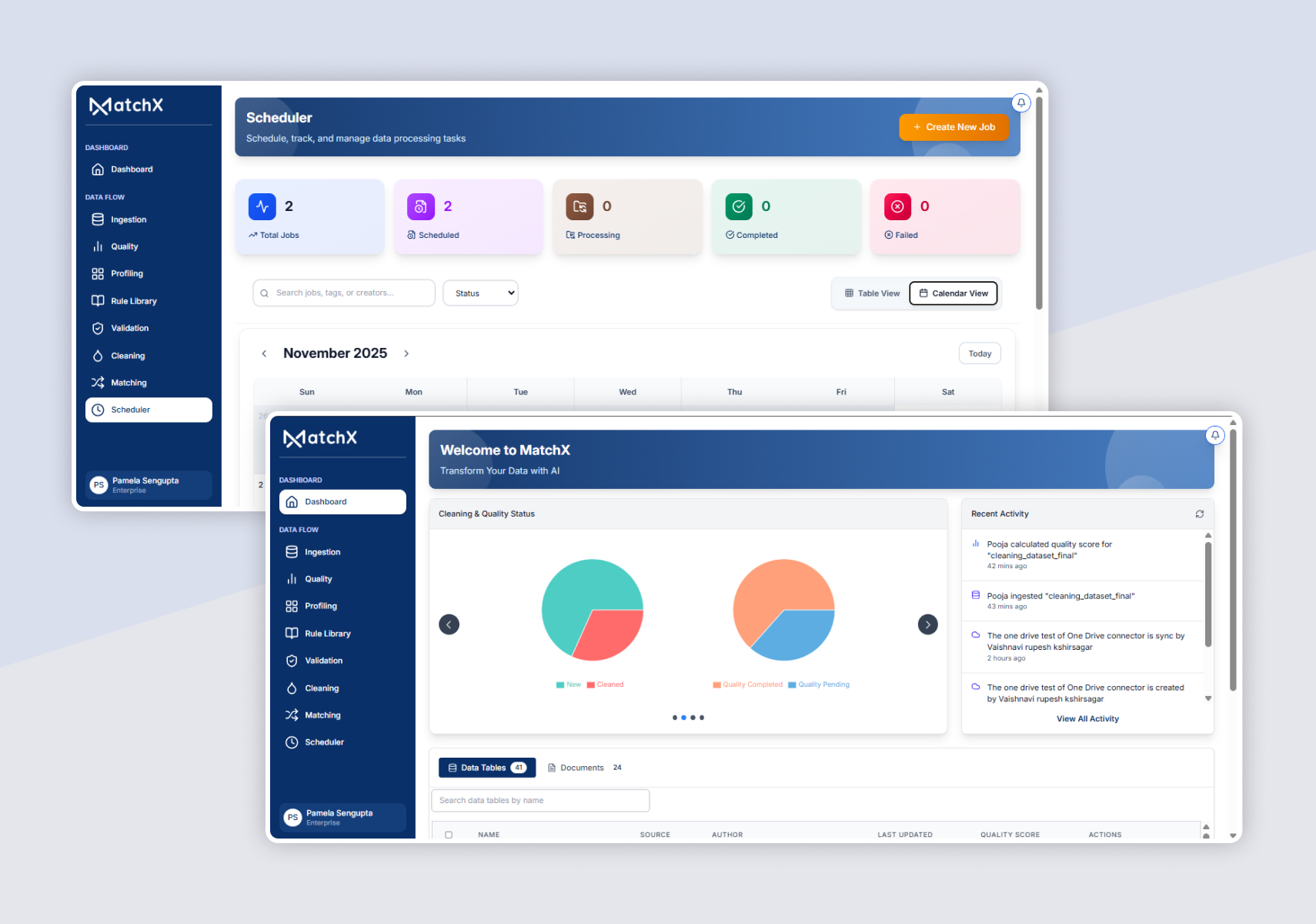

4. MatchX

MatchX is an AI-powered data quality and data matching platform designed to help organizations clean, connect, and govern their data across multiple systems and documents. It automates the process of identifying duplicates, correcting inconsistencies, linking related records, and ensuring data accuracy so that businesses can rely on trusted data for analytics, AI, and operational decisions.

5. Metaplane

Metaplane is a lightweight data observability platform designed for analytics teams that rely on tools like dbt, Snowflake, and Looker. It automatically detects anomalies in metrics, schema, and data volume, and alerts teams before bad data impacts decision-making.

Why MatchX over other standalone data quality tools?

Most standalone data quality tools only detect data issues. MatchX goes further by automating, connecting, and intelligently matching data across systems.

Key advantages of MatchX:

1. End-to-End Data Platform

Most tools only profile or validate data. MatchX manages the full lifecycle (ingestion, profiling, cleaning, matching, linking, and governance) in one platform.

2. Advanced Matching Capabilities

MatchX supports exact, fuzzy, probabilistic (Splink), and AI-assisted matching. It accurately resolves duplicates and links records across complex systems.


• Automated Scheduling: Run data profiling, cleaning, and matching jobs automatically on scheduled intervals, ensuring continuous data quality without manual effort.

• Data Linking: MatchX links related records across different systems and datasets, creating a connected view of customers, vendors, products, or documents.

• Hierarchical Matching: Supports multi-level matching such as parent–child relationships (company → subsidiary, category → product, etc.), helping manage complex enterprise data structures.
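
For readers unfamiliar with the difference between exact and fuzzy matching, the following Python sketch shows the general idea using the standard library's `difflib.SequenceMatcher`. It is a generic illustration only: it does not represent MatchX's implementation or Splink's probabilistic model, and the `normalize` rules, the function names, and the 0.85 threshold are assumptions chosen for the example.

```python
from difflib import SequenceMatcher


def normalize(name):
    """Lowercase, drop commas and periods, and collapse whitespace."""
    return " ".join(name.lower().replace(",", " ").replace(".", " ").split())


def similarity(a, b):
    """String similarity between 0.0 and 1.0 after normalization."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def match_records(left, right, threshold=0.85):
    """Return (left_name, right_name, score) for pairs at or above the threshold."""
    matches = []
    for a in left:
        for b in right:
            score = similarity(a, b)
            if score >= threshold:
                matches.append((a, b, round(score, 2)))
    return matches


# Hypothetical company names from two systems, for illustration only.
crm_names = ["Acme Corp.", "Globex Inc"]
billing_names = ["ACME Corp", "Initech"]
print(match_records(crm_names, billing_names))
```

An exact comparison would treat "Acme Corp." and "ACME Corp" as different records, while the fuzzy comparison links them. Comparing every pair like this scales poorly, so production systems usually narrow the candidate pairs first and use more robust scoring.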

3. Structured + Unstructured Data Support

Unlike traditional tools, MatchX processes both structured data and documents (PDFs, images). This enables document-to-data matching such as invoices, records, or forms.

4. AI-Powered Automation

MatchX uses AI for quality scoring, rule generation, and anomaly detection. This reduces manual rule management and accelerates data preparation.

5. Governance and Compliance

Built-in role-based access, approval workflows, audit trails, and data lineage ensure transparency and compliance for regulated industries.

6. Visual Transparency

Side-by-side comparisons, match confidence scores, and dashboards make data validation easier for both technical and business users.

7. Faster Deployment

MatchX offers no-code rule creation and simple integrations. Organizations can start improving data quality in days instead of months. (MatchX - AI-Powered Data Matching and Quality Platform, 2026)

8. Automated Scheduling

Automatically schedule data profiling, cleaning, and matching jobs. This ensures continuous data quality without manual execution.

Conclusion

Data quality is the foundation of trusted and reliable analytics, AI, and business decisions. As data volumes grow, organizations need tools that can automatically detect, correct, and monitor data issues across systems. Modern data quality platforms go beyond simple validation by enabling automation, governance, and intelligent data matching.

If you are looking to buy a data quality tool for your business, now is the right time. MatchX stands out by combining data profiling, cleaning, matching, and governance in one platform, helping organizations maintain clean, connected, and trusted data for better insights and operations.

FAQs

1. What features should businesses look for in a data quality tool?

Key features include data profiling, validation, cleansing, duplicate detection, monitoring, automated workflows, governance capabilities, and integration with existing systems.

2. What is the difference between data quality and data observability?

Data quality focuses on improving and maintaining the accuracy and consistency of data, while data observability monitors the health of data pipelines by tracking metrics like freshness, volume, and schema changes.

3. How does AI improve data quality management?

AI helps automate anomaly detection, rule creation, and data matching. This reduces manual effort and allows organizations to detect and correct data issues faster and more accurately.

4. Who typically uses data quality tools?

Data engineers, data analysts, data stewards, enterprise architects, and compliance teams commonly use data quality tools to ensure reliable data for analytics, reporting, and operational systems.
