Agentic AI has reached a familiar moment in the enterprise technology cycle. What began as an intriguing capability has moved rapidly through experimentation and is now pressing against the realities of production.

Early efforts have focused on exploration. Teams built agents that could reason through tasks, call tools, and produce impressive results in controlled environments. These experiments delivered valuable learning, but they also revealed the limits of novelty. Outside the sandbox, reliability falters, and cost is unpredictable. This is where many promising initiatives stall.

The shift now under way is consequential: agentic AI is moving from isolated demonstrations to systems that must integrate with enterprise architecture, respect security boundaries, and operate with consistent outcomes.

This article examines what it takes to make agentic AI production ready. It looks beyond frameworks and prototypes to the architectural patterns, controls, and operating practices that production systems require.

1. The Agentic AI Paradigm Shift

Earlier generative AI tools responded to individual prompts. The user remained responsible for structuring the task, guiding the system, and validating the outcome.

Agentic systems operate differently. They pursue a goal rather than respond to a prompt. Given an objective, an agent decomposes the task, selects tools, evaluates intermediate results, and adjusts its strategy until the goal is met or the system reaches a defined boundary.

This capability emerges from several technical characteristics.

Tool use allows agents to query APIs, search internal knowledge repositories, execute code, or interact with enterprise software systems.
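Tool use typically rests on a registry that maps the tool names a model can choose to functions the runtime can execute. The sketch below assumes the model emits a tool name plus keyword arguments; the tool names (`search_knowledge_base`, `get_invoice_total`) and their toy behaviour are hypothetical placeholders for real enterprise integrations, not any framework's API.

```python
from typing import Any, Callable, Dict, List

# Registry mapping tool names (as the model would refer to them) to Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that registers a function under the given tool name."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("search_knowledge_base")  # hypothetical tool
def search_knowledge_base(query: str) -> List[str]:
    # Stand-in for a call to an internal search service.
    docs = ["Refund policy: 30 days", "Travel policy: economy class"]
    return [d for d in docs if query.lower() in d.lower()]


@tool("get_invoice_total")  # hypothetical tool
def get_invoice_total(invoice_id: str) -> float:
    # Stand-in for an ERP lookup.
    return {"INV-1": 120.0, "INV-2": 75.5}[invoice_id]


def dispatch(name: str, arguments: Dict[str, Any]) -> Any:
    """Run the tool the model selected, rejecting names that are not registered."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**arguments)


# Example: the model has chosen a tool and produced its arguments.
print(dispatch("search_knowledge_base", {"query": "refund"}))
```

Rejecting unregistered names keeps the model from invoking anything outside the set of integrations the team has deliberately exposed.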

Multi-step reasoning enables an agent to evaluate results, revise assumptions, and iterate through a sequence of operations. Approaches such as ReAct (reasoning and acting) demonstrate how reasoning and action can alternate in cycles rather than occurring as isolated responses.
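A reason-and-act cycle can be sketched as a loop that alternates between a decision step and a tool call, feeding each observation back into the next decision until the agent answers or hits a step limit. Here a scripted `fake_model` function stands in for the language model; it, the `Step` structure, and the `lookup` tool are hypothetical and only illustrate the control flow.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Step:
    thought: str
    action: Optional[str] = None   # tool to call; None when the model is answering
    action_input: str = ""
    answer: Optional[str] = None   # final answer, set only on the last step


def fake_model(goal: str, observations: List[str]) -> Step:
    """Scripted stand-in for an LLM: look up one fact, then answer."""
    if not observations:
        return Step(thought="I need to look up the capital.", action="lookup", action_input="France")
    return Step(thought="I have enough information.", answer=f"The capital is {observations[-1]}.")


def lookup(country: str) -> str:
    # Hypothetical tool backed by a tiny in-memory table.
    return {"France": "Paris", "Japan": "Tokyo"}.get(country, "unknown")


def run_agent(goal: str, tools: Dict[str, Callable[[str], str]],
              max_steps: int = 5) -> Tuple[Optional[str], List[str]]:
    observations: List[str] = []
    trace: List[str] = []
    for _ in range(max_steps):  # hard boundary on the number of reason/act cycles
        step = fake_model(goal, observations)  # reason
        trace.append(f"Thought: {step.thought}")
        if step.answer is not None:
            trace.append(f"Answer: {step.answer}")
            return step.answer, trace
        assert step.action is not None
        observation = tools[step.action](step.action_input)  # act
        trace.append(f"Action: {step.action}({step.action_input}) -> {observation}")
        observations.append(observation)  # observe, feeding the next cycle
    return None, trace  # boundary reached without an answer


answer, trace = run_agent("What is the capital of France?", {"lookup": lookup})
print("\n".join(trace))
```

Returning `None` when the step limit is reached, rather than forcing an answer, makes the "defined boundary" explicit and lets the caller decide whether to escalate.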

Persistence allows agents to maintain context across tasks. They can remember previous interactions, maintain state, and continue work that unfolds over extended time horizons.

These capabilities move AI from the role of assistant to that of operator.

The scale of this shift is visible in adoption data. By mid-2025, 72% of enterprises reported using AI agents in some capacity, with 40% already running multiple agents in production environments.

Growth is accelerating. In the first half of 2025 alone, the number of enterprise agents deployed grew by 119%, while the actions executed by those agents increased by roughly 80% month over month across sales and service workflows.

Industry forecasts suggest that this transition will soon become structural rather than experimental. Gartner estimates that 40% of enterprise applications will include task-specific AI agents by 2026, up from less than 5% in 2025.

For enterprises, the implication is significant. Many business processes consist of structured but multi-step workflows. Market research, regulatory analysis, claims processing, supply chain coordination, and internal reporting all involve gathering information, applying logic, and executing actions across systems. Agentic architectures enable these workflows to be partially automated in ways earlier generative systems could not.

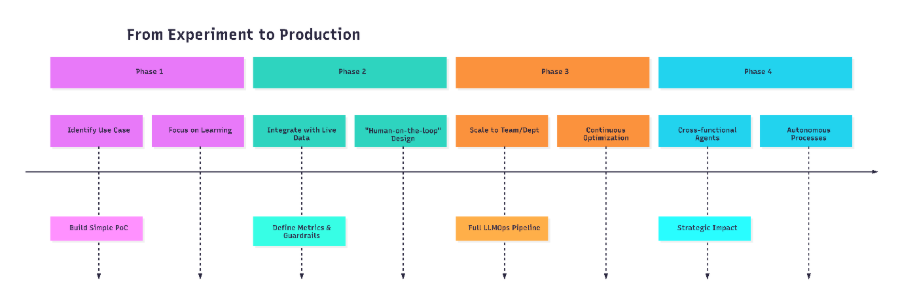

2. The Enterprise Journey: From POC to Production

Most enterprises begin their agentic AI journey inside controlled environments. These early stages emphasize learning rather than scale.

Phase 1: Experimentation and Scoping

In the first phase, teams explore use cases that are informative but low risk. Typical examples include automated report generation, internal knowledge retrieval, or workflow assistance for research teams.

Adoption data reflects this experimental stage. Surveys of enterprise IT leaders show that 62% of organisations are currently experimenting with AI agents, while only about 23% have moved into scaled deployments across business functions.

Teams often rely on orchestration frameworks such as LangChain, LlamaIndex, or AutoGen to construct early prototypes. These tools simplify tool integration, reasoning loops, and context management.

Within weeks, organisations can assemble agents capable of performing multi-step tasks that once required manual effort.

However, prototypes reveal as many weaknesses as strengths. Agents may generate convincing but incorrect outputs. Tool calls may fail silently. Long reasoning chains can inflate computational costs.

These lessons define the boundary between demonstration and operational systems.
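One common mitigation for silent tool failures is to wrap every tool call so that exceptions and empty results surface as explicit, retryable errors instead of being passed to the model as if they were valid data. The sketch below assumes a simple retry-with-backoff policy; `ToolError`, `call_tool`, and the flaky example tool are illustrative names, not a library API.

```python
import time
from typing import Any, Callable, Optional


class ToolError(Exception):
    """Raised when a tool call fails or returns an unusable result."""


def call_tool(fn: Callable[..., Any], *args: Any, retries: int = 2,
              backoff_s: float = 0.0, **kwargs: Any) -> Any:
    """Call a tool, retrying on exceptions or empty results, and fail loudly if it never succeeds."""
    last_error: Optional[Exception] = None
    for attempt in range(retries + 1):
        try:
            result = fn(*args, **kwargs)
        except Exception as exc:  # record the failure instead of swallowing it
            last_error = exc
        else:
            if result is None or result == "" or result == []:
                last_error = ToolError(f"{fn.__name__} returned an empty result")
            else:
                return result
        if attempt < retries:
            time.sleep(backoff_s * (2 ** attempt))  # exponential backoff between attempts
    raise ToolError(f"{fn.__name__} failed after {retries + 1} attempts") from last_error


calls = {"n": 0}


def flaky_lookup(key: str) -> str:
    # Hypothetical tool that fails once with a transient error, then succeeds.
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("transient network error")
    return f"value-for-{key}"


print(call_tool(flaky_lookup, "abc"))  # value-for-abc
```

Treating an empty result as a failure is a deliberate, debatable choice; some tools legitimately return nothing, and those would need their own validation rule.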

The most important success metric at this stage is learning. Teams gain familiarity with model behaviour, system orchestration, and evaluation techniques. Without this phase, production efforts later become fragile and difficult to maintain.

Phase 2: Operational Integration

Once a promising use case emerges, attention shifts to integration.

Agents must connect to enterprise data sources, internal APIs, authentication systems, and workflow tools. Security teams evaluate access boundaries. Data governance teams assess how information flows through the system.

This stage often reveals hidden complexity. Modern enterprise processes frequently involve dozens of connected endpoints and services, so orchestration, rather than model capability, becomes the central challenge.

Success metrics evolve as well. Instead of novelty, organisations measure latency, reliability, and operational cost.

Phase 3: Production Deployment

Production systems require predictable performance.

At this stage, organisations introduce structured monitoring, automated evaluation pipelines, and operational guardrails. The agent becomes a component within a broader system architecture rather than an isolated program.

This transition is now happening at scale. Research indicates that 96% of enterprise leaders plan to expand their use of AI agents within the next twelve months, reflecting the rapid movement from pilot programs to operational deployments.

Traditional machine learning models followed the same path, from experimentation to MLOps pipelines. Agentic AI now demands an equivalent operational discipline.

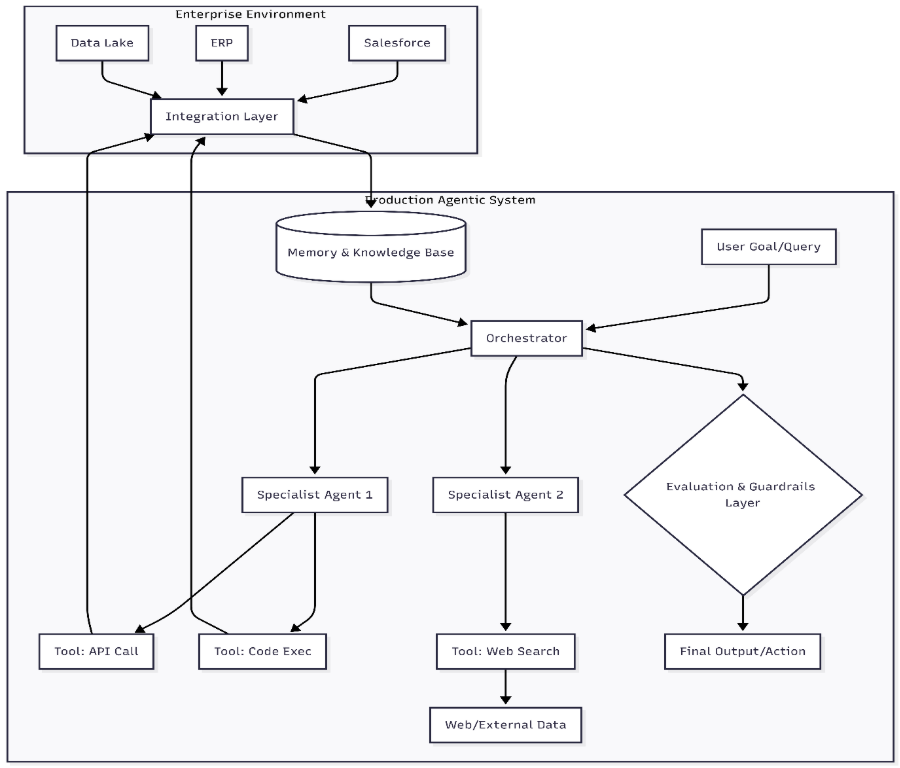

3. The Core Architecture of a Production Agentic System

A production-grade agentic system resembles a layered platform rather than a single model.

At the centre sits the orchestrator, also known as the agent controller. This component receives a goal, decomposes the task into steps, and coordinates interactions between specialised agents and external tools.

Surrounding the orchestrator are specialist agents and tools. These components focus on defined capabilities such as data retrieval, code execution, financial analysis, or document parsing.
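The orchestrator and specialist pattern can be sketched as two parts: a planner that turns a goal into ordered steps, and a router that hands each step to the specialist responsible for it. In production the plan would typically come from a model; here it is hard-coded, and the `Orchestrator` class and the specialist names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# A specialist receives an instruction plus the results of earlier steps.
Specialist = Callable[[str, Dict[str, str]], str]


@dataclass
class Task:
    specialist: str   # which specialist agent should handle this step
    instruction: str


class Orchestrator:
    def __init__(self, specialists: Dict[str, Specialist]) -> None:
        self.specialists = specialists

    def plan(self, goal: str) -> List[Task]:
        # Stand-in for model-driven decomposition of the goal into steps.
        return [
            Task("retrieval", f"collect documents for: {goal}"),
            Task("analysis", "summarise key figures"),
            Task("reporting", "draft the report"),
        ]

    def run(self, goal: str) -> Dict[str, str]:
        results: Dict[str, str] = {}
        for task in self.plan(goal):
            handler = self.specialists.get(task.specialist)
            if handler is None:
                raise LookupError(f"no specialist registered for '{task.specialist}'")
            # Each specialist sees earlier results, so later steps build on earlier ones.
            results[task.specialist] = handler(task.instruction, results)
        return results


specialists: Dict[str, Specialist] = {
    "retrieval": lambda instr, ctx: "3 filings found",
    "analysis": lambda instr, ctx: f"analysed ({ctx['retrieval']})",
    "reporting": lambda instr, ctx: f"report based on {ctx['analysis']}",
}
print(Orchestrator(specialists).run("Q3 supplier risk")["reporting"])
```

Keeping planning and routing separate means the planner can later be swapped for a model without changing how specialists are invoked.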

The architecture increasingly resembles distributed software systems rather than single model deployments. Industry data shows that many enterprise processes now involve 50 or more integrated services or endpoints, requiring agents to coordinate across complex technical environments.

Another essential layer involves memory and knowledge management.

Short-term memory maintains conversational context during task execution. Long-term memory stores structured knowledge that allows agents to retrieve historical data or previous results. Vector databases support semantic search across documents, while graph databases capture relationships between entities within enterprise knowledge systems.

Without structured memory, agents repeatedly rediscover information and produce inconsistent reasoning paths.
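The split between short-term and long-term memory can be illustrated with a bounded buffer for the current task plus a searchable store for earlier results. A real system would use a vector database and learned embeddings; to keep this sketch self-contained it substitutes bag-of-words vectors and cosine similarity, and the `AgentMemory` class is hypothetical.

```python
import math
from collections import Counter, deque
from typing import Deque, List, Tuple


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: lower-cased word counts.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class AgentMemory:
    def __init__(self, short_term_size: int = 4) -> None:
        # Short-term: only the most recent turns; the oldest drop off automatically.
        self.short_term: Deque[str] = deque(maxlen=short_term_size)
        # Long-term: stored (text, vector) pairs searchable by similarity.
        self.long_term: List[Tuple[str, Counter]] = []

    def remember_turn(self, text: str) -> None:
        self.short_term.append(text)

    def store(self, fact: str) -> None:
        self.long_term.append((fact, embed(fact)))

    def recall(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        ranked = sorted(self.long_term, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


mem = AgentMemory(short_term_size=2)
mem.store("supplier acme delivery delays in march")
mem.store("quarterly revenue grew in europe")
print(mem.recall("which supplier had delivery delays"))
```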

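The architecture also requires an evaluation and guardrails layer, which checks agent outputs and proposed actions against quality criteria and policy rules before they take effect.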
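As a minimal sketch of such a layer, each action an agent proposes can pass through a set of checks before it executes: an allowlist of permitted actions, a cap on monetary value, and a rough pattern check for card numbers in outgoing text. The specific rules, the 500.0 limit, and the `check_action` function are assumptions chosen for illustration.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class ProposedAction:
    name: str
    amount: float = 0.0
    message: str = ""


ALLOWED_ACTIONS = {"send_email", "create_ticket", "issue_refund"}  # hypothetical allowlist
MAX_REFUND = 500.0                                                 # hypothetical limit
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){13,16}\b")               # rough card-number pattern


def check_action(action: ProposedAction) -> List[str]:
    """Return a list of violations; an empty list means the action may proceed."""
    violations: List[str] = []
    if action.name not in ALLOWED_ACTIONS:
        violations.append(f"action '{action.name}' is not allowlisted")
    if action.name == "issue_refund" and action.amount > MAX_REFUND:
        violations.append(f"refund {action.amount} exceeds limit {MAX_REFUND}")
    if CARD_PATTERN.search(action.message):
        violations.append("message appears to contain a card number")
    return violations


print(check_action(ProposedAction("issue_refund", amount=900.0)))
```

Returning every violation, rather than stopping at the first, gives reviewers and audit logs a complete picture of why an action was blocked.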

Finally, the system connects to the enterprise infrastructure through an integration layer.

APIs, authentication systems, workflow engines, and legacy platforms comprise the operational environment in which agents perform tasks. Integration determines whether the system becomes a useful operational tool or remains an isolated experiment.

4. Navigating the Implementation Minefield

Despite strong progress, deploying agentic AI at scale poses several challenges distinct from those of traditional software systems.

Reliability and Hallucination

Large language models generate outputs based on probability rather than strict rules.

When agents operate autonomously across multiple reasoning steps, small inaccuracies can compound into significant errors.

Organisations address this risk through structured prompts, verification steps, and retrieval-based knowledge systems that ground responses in trusted data.

Security and Governance

Autonomous agents interacting with enterprise systems raise sensitive questions.

Recent industry surveys show that 86% of security leaders believe AI will increase the sophistication of social engineering attacks, while 82% expect it to complicate threat detection and persistence techniques.

As a result, security architectures now enforce role-based permissions, use encrypted data channels, and maintain detailed audit logs that record every action an agent takes.
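Role-based permissions and audit logging can be combined in a single gate that every agent action passes through. In the sketch below, the roles, permission strings, and in-memory log are hypothetical; a production system would back them with the enterprise identity provider and an append-only log store. Encryption of data channels belongs to the transport layer and is not shown.

```python
import json
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical mapping from agent role to the permissions it holds.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "support_agent": {"crm:read", "ticket:create"},
    "finance_agent": {"erp:read", "refund:issue"},
}

AUDIT_LOG: List[str] = []  # stand-in for an append-only audit store


def authorize(agent_id: str, role: str, permission: str, detail: str) -> bool:
    allowed = permission in ROLE_PERMISSIONS.get(role, set())
    # Record every attempt, allowed or denied, as one structured JSON line.
    AUDIT_LOG.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "role": role,
        "permission": permission,
        "detail": detail,
        "allowed": allowed,
    }))
    return allowed


print(authorize("agent-7", "support_agent", "refund:issue", "refund order 1042"))  # False
```

Logging denied attempts as well as approved ones is what makes the trail useful for investigating misbehaving agents.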

Cost Management

Agentic systems can generate large volumes of model calls during reasoning loops. If left uncontrolled, this behaviour leads to unpredictable computational expenses.

Companies implement limits on reasoning depth, monitor token usage, and route simpler tasks to smaller models to maintain cost stability.

The stakes are substantial. Studies indicate that agentic automation could reduce operational costs by up to 43% in certain enterprise processes, making cost governance essential to realising those benefits.
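The three cost controls named in this section can be sketched together: a budget object that caps reasoning steps and tokens, plus a router that sends short, tool-free requests to a smaller model. Token counts here are approximated by word count, and the model names, thresholds, and routing heuristic are placeholders rather than recommendations.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    pass


@dataclass
class Budget:
    max_steps: int = 8
    max_tokens: int = 20_000
    steps: int = 0
    tokens: int = 0

    def charge(self, prompt: str, completion: str) -> None:
        # Rough token estimate: one token per whitespace-separated word.
        self.steps += 1
        self.tokens += len(prompt.split()) + len(completion.split())
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self.tokens > self.max_tokens:
            raise BudgetExceeded(f"token limit {self.max_tokens} exceeded")


def choose_model(task: str, needs_tools: bool) -> str:
    # Hypothetical routing rule: short, tool-free requests go to the small model.
    if not needs_tools and len(task.split()) < 50:
        return "small-model"
    return "large-model"


budget = Budget(max_steps=2, max_tokens=100)
budget.charge("summarise this ticket", "customer wants a refund")
print(choose_model("classify this email as spam or not", needs_tools=False))  # small-model
```

Raising an exception when a limit is crossed forces the calling loop to stop or escalate, instead of letting a runaway reasoning chain keep spending.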

Organisational Change

The technical challenges are only part of the transition.

Employees accustomed to deterministic software may struggle to trust probabilistic systems. At the same time, workflows must evolve so that humans supervise systems rather than execute every step themselves.

Training and communication become critical elements of successful adoption.

5. Best Practices for a Sustainable Agentic AI Factory

Enterprises that succeed with agentic AI tend to adopt a disciplined operating model rather than rely on isolated projects.

A common starting point is a tightly scoped pilot with a measurable return on investment. When teams select problems with clear economic value, the technology moves from curiosity to an operational tool.

Evidence suggests that this discipline matters. Organisations implementing structured agent programs report average returns on investment exceeding four times their initial spend, with payback periods of roughly eleven months.

Evaluation frameworks should be implemented from the beginning. Automated testing across representative scenarios helps detect failures early and provides objective benchmarks for improvement.
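An evaluation harness can start as little more than a list of representative scenarios with expected outcomes, run on every change so that regressions show up as a falling pass rate. The sketch below assumes exact-match scoring and a toy `toy_agent` function standing in for the real system; both are hypothetical, and real evaluations often need richer graders than exact match.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Scenario:
    name: str
    prompt: str
    expected: str


def run_suite(agent: Callable[[str], str], scenarios: List[Scenario]) -> Tuple[float, List[str]]:
    """Run every scenario and return the pass rate plus a description of each failure."""
    failures: List[str] = []
    for s in scenarios:
        output = agent(s.prompt)
        if output.strip().lower() != s.expected.strip().lower():
            failures.append(f"{s.name}: expected {s.expected!r}, got {output!r}")
    pass_rate = 1 - len(failures) / len(scenarios)
    return pass_rate, failures


def toy_agent(prompt: str) -> str:
    # Hypothetical agent under test: approves anything marked "under limit".
    return "approve" if "under limit" in prompt else "escalate"


scenarios = [
    Scenario("small claim", "claim 200 under limit", "approve"),
    Scenario("large claim", "claim 9000 over limit", "escalate"),
    Scenario("missing docs", "claim 300 under limit, no receipt", "request documents"),
]
rate, failures = run_suite(toy_agent, scenarios)
print(f"pass rate {rate:.0%}")  # pass rate 67%
```

The failing "missing docs" case is the kind of gap a representative scenario set is meant to expose before the agent reaches production.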

Another important principle concerns the transition from human-in-the-loop to human-on-the-loop oversight.

In early deployments, humans approve each decision an agent makes. As reliability improves, humans shift toward supervisory roles, monitoring system performance and intervening only when anomalies occur.
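The move from human-in-the-loop to human-on-the-loop can be expressed as a change in where the approval gate sits. In the sketch below, `in_the_loop` mode asks a reviewer before every action, while `on_the_loop` mode executes automatically and escalates only anomalies, approximated here by a confidence score below a threshold. The mode names, the confidence signal, and the 0.8 threshold are assumptions for illustration.

```python
from typing import Callable, List


def execute_with_oversight(actions: List[dict], mode: str,
                           approve: Callable[[dict], bool], threshold: float = 0.8) -> List[str]:
    """Return the names of the actions that were executed."""
    executed: List[str] = []
    for action in actions:
        if mode == "in_the_loop":
            needs_review = True  # a human approves every decision
        elif mode == "on_the_loop":
            needs_review = action["confidence"] < threshold  # only anomalies escalate
        else:
            raise ValueError(f"unknown oversight mode: {mode}")
        if needs_review and not approve(action):
            continue  # reviewer rejected the action
        executed.append(action["name"])
    return executed


reviewed: List[str] = []


def reviewer(action: dict) -> bool:
    # Hypothetical reviewer that records what it saw and rejects everything.
    reviewed.append(action["name"])
    return False


actions = [
    {"name": "update_crm", "confidence": 0.95},
    {"name": "issue_refund", "confidence": 0.55},
]
print(execute_with_oversight(actions, "on_the_loop", reviewer))  # ['update_crm']
```

In `on_the_loop` mode the reviewer only sees the low-confidence refund, which is the workload reduction the supervisory model aims for.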

Operational infrastructure also evolves. Traditional MLOps pipelines expand into LLMOps or AgentOps environments that track prompt versions, monitor agent performance, and automate deployment updates.
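Prompt version tracking is one of the more concrete practices in this tooling: each prompt is stored under a content hash so that every agent run can be tied to the exact text it used. The `PromptRegistry` class below is a hypothetical in-memory sketch; dedicated tooling would add persistence, access control, and links to evaluation results.

```python
import hashlib
from typing import Dict, List


class PromptRegistry:
    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}  # prompt name -> ordered list of hashes
        self._texts: Dict[str, str] = {}           # hash -> prompt text

    def register(self, name: str, text: str) -> str:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        history = self._versions.setdefault(name, [])
        if not history or history[-1] != digest:   # skip no-op re-registrations
            history.append(digest)
            self._texts[digest] = text
        return digest

    def current(self, name: str) -> str:
        return self._texts[self._versions[name][-1]]

    def history(self, name: str) -> List[str]:
        return list(self._versions.get(name, []))


reg = PromptRegistry()
v1 = reg.register("claims_triage", "Classify the claim as approve or escalate.")
v2 = reg.register("claims_triage", "Classify the claim as approve, escalate, or request documents.")
reg.register("claims_triage", "Classify the claim as approve, escalate, or request documents.")  # no new version
print(reg.history("claims_triage") == [v1, v2])  # True
```

Logging the returned hash alongside each agent run makes it possible to correlate a change in behaviour with the prompt change that caused it.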

The result is an internal capability sometimes described as an agentic AI factory. Teams design, deploy, evaluate, and refine agents through repeatable engineering processes.

6. The Future: Autonomous Enterprises

The current generation of agentic systems still operates within narrow boundaries. Agents perform defined tasks under structured supervision.

However, the trajectory points toward deeper integration with enterprise decision-making.

Industry forecasts suggest that by 2028, up to one third of enterprise workflows may be coordinated by collaborative networks of AI agents rather than by single applications.

In the near term, agents will function as operational colleagues. They will coordinate research, analyse datasets, draft regulatory documentation, and manage repetitive workflows that span multiple systems.

Over time, clusters of agents may manage entire operational processes. Supply chain optimisation, procurement analysis, compliance monitoring, and customer support coordination could operate through networks of specialised agents supervised by human managers.

The long-term vision is not a fully automated enterprise but a self-optimising organisation where intelligent systems continuously analyse performance, identify inefficiencies, and recommend structural improvements.

Human leadership remains responsible for strategy and judgment. Agents become the operational infrastructure that executes and refines complex processes at scale.

Conclusion

Agentic AI marks a transition from interactive tools to autonomous systems that perform structured work inside the enterprise.

The challenge facing organisations is no longer technical curiosity. It is operational execution.

Successful deployment requires architectural discipline, rigorous evaluation, strict governance, and thoughtful integration with existing workflows.

When these elements align, agentic systems evolve from experimental prototypes into dependable components of enterprise infrastructure.

For leaders guiding this transition, the opportunity extends beyond efficiency gains. Properly implemented, agentic AI enables organisations to automate complex knowledge work and build operating models that adapt faster than traditional systems.

The movement from experiment to production represents more than technological progress. It signals the emergence of a new operational layer in modern enterprises.

See how PromptX applies these principles to deliver smarter, context-aware enterprise search.
